Advanced Autonomous Systems

Cutting-Edge Technology for Autonomous Flight

Aurora is advancing autonomous decision-making and human-machine interaction across commercial and defense applications. We develop new technology for decision making in complex, multi-vehicle scenarios and apply our expertise in artificial intelligence and machine learning (AI/ML), guidance, navigation, and control (GNC), and robotics to rapidly prototype and iterate on real air vehicles.

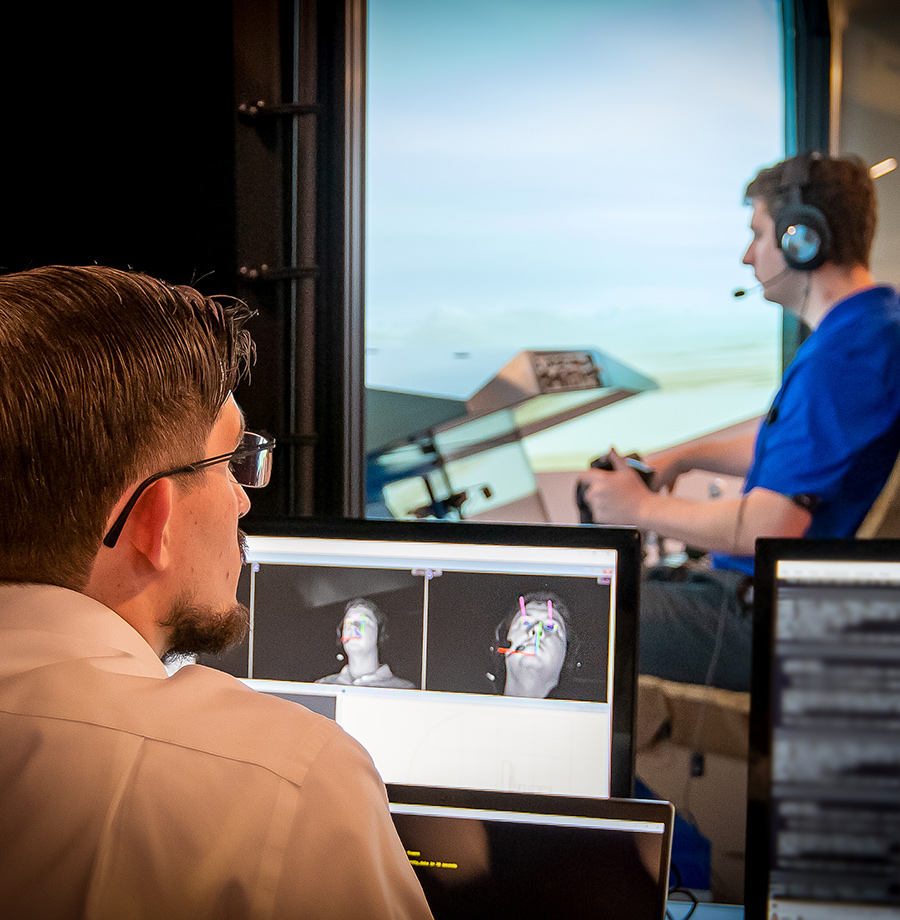

Human-Machine Teaming

We team experienced aviators with unmanned systems to improve safety, enable new capabilities, and increase trust in autonomy. This work combines new human-autonomy interaction methods, advances in autonomy, and rigorous study of technology's role in mission success.

Perception

Our perception technologies, including detect-and-avoid and computer vision, enable autonomous flight across platforms ranging from small unmanned aircraft systems (UAS) to urban air mobility vehicles.

Guidance, Navigation and Control

Guidance, navigation, and control (GNC) is central to operating and integrating autonomous air vehicles. Aurora's GNC team supports a broad range of internal and customer vehicle programs across the commercial and defense industries.

Early-Stage Technology Development

Aurora partners with well-respected university research programs and government labs to develop technology at the cutting edge of AI and machine learning. Example programs include: